Why insurance and healthcare must automate with precision or not at all.

Ask any executive in insurance or healthcare whether AI could transform their customer service operation, and the answer is almost always yes. Ask them whether AI can fully replace their customer service team, and the conversation gets complicated fast.

The promise is genuine: AI offers faster response times, lower costs, and round-the-clock availability. Yet regulated industries operate under a different set of rules than retail. Accuracy is not a nice-to-have; it is a legal and ethical obligation. Too many AI projects in these sectors fail to confront that obligation head-on.

If automation cannot perform a task with near-perfect accuracy and full repeatability, a human still has to check, correct, and redo that work. The efficiency gain evaporates, and the compliance risk remains.

This post explores where AI genuinely adds value in regulated customer service, why full replacement remains out of reach, and what a smarter automation strategy looks like in practice.

A Growing Divide Between Leaders and Laggards

The adoption of AI in customer care is accelerating, but unevenly. McKinsey's latest State of Customer Care survey, published in February 2026 and drawing on responses from 440 customer care leaders across geographies, reveals a widening gap between organisations leading the transformation and those still stuck in pilot mode.

The research identifies four archetypes: Leaders, Accelerators, Builders, and Laggards. The defining characteristic of leaders is not simply that they have deployed more AI. It is that they have fundamentally redesigned how work gets done. They treat AI as an operating model transformation, not a technology addition.

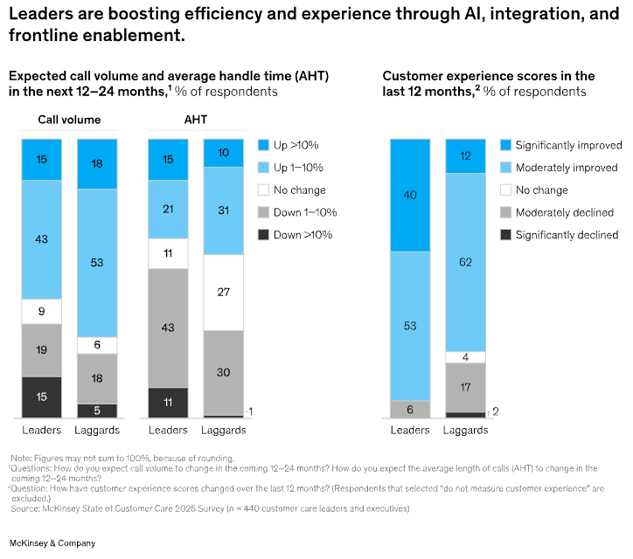

Sixty-seven per cent of leaders have invested in foundational AI at scale, including conversational chatbots, workflow automation, and AI-assisted knowledge retrieval, compared with just 16 per cent of laggards. The impact is measurable: 40 per cent of leaders reported significantly improved customer experience scores over the past 12 months, compared with only 12 per cent of laggards.

The message from McKinsey is clear: the organisations pulling ahead are not adding AI to existing operations; they are rewiring them. That distinction matters enormously in regulated sectors, where operational discipline, governance, and auditability are non-negotiable.

The Case for Targeted AI Automation

When applied to the right tasks, AI can genuinely transform customer service in insurance and healthcare.

In regulated environments, certain interactions are highly repetitive, well-defined, and low in complexity: policy status enquiries, appointment scheduling, standard eligibility checks, and first-level triage routing. These tasks follow predictable patterns, require consistent outputs, and can be documented with audit trails. When automation is scoped precisely to these workflows, the gains are real.

McKinsey describes this as agentic AI: systems that execute specific workflows either autonomously or in collaboration with human agents, freeing experienced staff to focus on complex, high-value interactions. In practice, AI handles predictable volume and humans handle nuance. Nine in ten leading organisations in McKinsey's survey are already scaling AI across core workflows on this basis, while redeploying human capacity to areas where empathy, judgement, and accountability genuinely matter.

The organisations succeeding with AI in customer care are not replacing people. They are redesigning workflows so that people and machines collaborate, each doing what it does best.

Gartner reinforces this principle with the concept of high-trust, precision automation: AI should handle repetitive steps with high consistency, while humans retain oversight over exceptions and sensitive cases. This hybrid model preserves efficiency without eroding compliance or customer trust.
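To make the hybrid model concrete, here is a minimal sketch of a scoping rule in Python. It is an illustration under stated assumptions, not a description of any vendor's or analyst's method: the intent names, the `Enquiry` fields, the `route` function, and the 0.95 confidence threshold are all hypothetical, and a real deployment would set them through its own governance process.

```python
from dataclasses import dataclass

# Hypothetical allow-list, loosely based on the low-complexity tasks named above.
AUTOMATABLE_INTENTS = {
    "policy_status",
    "appointment_scheduling",
    "eligibility_check",
    "triage_routing",
}


@dataclass
class Enquiry:
    intent: str                        # label from an upstream classifier (assumed to exist)
    confidence: float                  # classifier confidence in [0, 1]
    vulnerable_customer: bool = False  # flag set by an upstream check (assumed)


def route(enquiry: Enquiry, min_confidence: float = 0.95) -> str:
    """Return "automate" only for in-scope, high-confidence, non-sensitive cases."""
    if enquiry.vulnerable_customer:
        return "human"  # sensitive cases always go to a person
    if enquiry.intent not in AUTOMATABLE_INTENTS:
        return "human"  # anything outside the agreed scope is an exception
    if enquiry.confidence < min_confidence:
        return "human"  # uncertain classifications are not automated
    return "automate"


print(route(Enquiry("policy_status", 0.99)))        # automate
print(route(Enquiry("claim_dispute", 0.99)))        # human
print(route(Enquiry("policy_status", 0.99, True)))  # human
```

The design choice worth noting is that the default is the human path: automation has to qualify on every test, rather than humans being the fallback only when something visibly breaks.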

The business case for this approach is compelling. McKinsey estimates that AI could unlock up to 60 per cent of addressable care volume, freeing human capacity for the interactions that drive genuine value and loyalty. Done well, this turns customer service from a cost centre into a revenue driver. But that outcome depends entirely on automation being scoped to tasks where accuracy and repeatability are consistently achievable.

Full Automation Introduces More Risk Than It Removes

Despite the momentum, the idea that AI can fully replace human customer service in insurance or healthcare remains both unrealistic and potentially harmful. The reason is not a lack of ambition; it is a fundamental characteristic of regulated work.

In these sectors, any error, however minor, requires human intervention to identify, correct, and justify. A miscommunication about a policy exclusion, an incorrectly routed clinical enquiry, or an automated response that fails to flag a vulnerable customer can trigger regulatory scrutiny, financial penalty, or direct harm. The moment an automated interaction falls short of near-perfect accuracy, a human must re-validate the entire exchange. At scale, this does not halve the workload; the work is effectively done twice, once by the machine and once by a person.
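A rough back-of-the-envelope model shows why the efficiency case depends on scope. The sketch below is hypothetical throughout: the function name, the simplifying assumption that every automated exchange is reviewed and every erroneous one is redone by hand, and all of the figures are illustrative and do not come from the research cited in this article.

```python
def human_minutes_per_contact(handle_min: float, review_min: float, error_rate: float) -> float:
    """Expected human minutes per automated contact.

    Assumes every automated exchange is reviewed by a person (review_min)
    and every erroneous one is then redone by hand (handle_min).
    """
    return review_min + error_rate * handle_min


# Illustrative figures only.
baseline = 10.0  # minutes for a human to handle the contact with no automation

# Broadly scoped: review means re-validating the whole exchange.
broad = human_minutes_per_contact(handle_min=10.0, review_min=10.0, error_rate=0.05)

# Tightly scoped: a quick audit check suffices and errors are rare.
narrow = human_minutes_per_contact(handle_min=10.0, review_min=2.0, error_rate=0.01)

print(f"no automation: {baseline:.1f} min")
print(f"broad scope:   {broad:.1f} min")
print(f"narrow scope:  {narrow:.1f} min")
```

With these made-up inputs, the broadly scoped case costs 10.5 human minutes per contact against a 10-minute baseline, so the saving disappears entirely. The tightly scoped case costs 2.1 minutes. The gap comes from how much human checking the automation still requires.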

EY has highlighted that without explainability, transparency, and full auditability, AI solutions struggle to meet the regulatory requirements placed on firms in these sectors. Final accountability for advice and outcomes cannot be delegated to an algorithm. Human oversight is not just a quality control measure; it is a legal and ethical necessity.

Poorly scoped automation does not reduce operational burden in regulated industries; it redistributes it, while adding compliance risk. The efficiency case only holds when automation works reliably, every time.

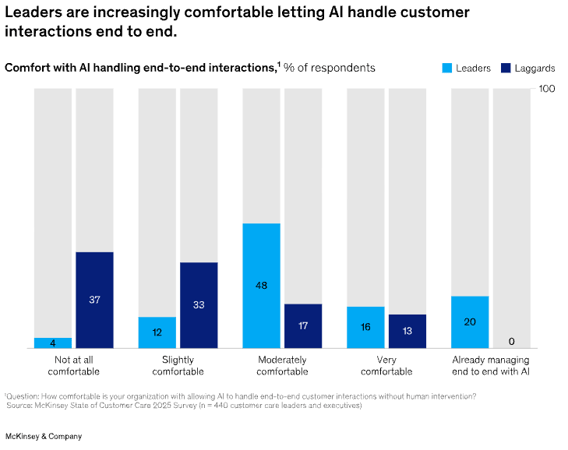

Beyond compliance, there is a trust dimension that is frequently underestimated. McKinsey's research found that 37 per cent of laggard organisations are not at all comfortable with AI handling end-to-end customer interactions. Even among leaders, the organisations most advanced in AI adoption, 64 per cent still cite customer preference for human contact as a top barrier to digital migration.

The emotional stakes in healthcare and insurance are high. A claimant in crisis, a patient receiving a difficult diagnosis, or a policyholder disputing a rejection is not seeking a quick answer. They are looking for someone who listens, understands context, and takes responsibility. Almost 70 per cent of respondents in McKinsey's survey agreed that empathy and trust will always require human involvement. No current AI system can reliably replicate that, and attempting to automate it without the right foundations risks damaging customer relationships that took years to build.

Conclusion

The opportunity is real, but it is more specific than the headlines suggest. AI delivers genuine value when it is scoped precisely to tasks that can be automated with high accuracy and full repeatability. When that standard is met, automation reduces operational cost, improves consistency, and frees human expertise for the interactions that demand it. When that standard is not met, the gains are illusory, and the risks are compounded.

The organisations leading this transformation, as McKinsey's latest research makes clear, are not those that have deployed the most AI. They are those that have redesigned their operating models around a clear principle: automate what can be done reliably and safely, and ensure humans remain integral to oversight, judgement, and accountability.

For leaders in regulated businesses, the strategic question should not be how to automate everything. It should be which specific workflows automation can be trusted to perform, and how to build the governance, talent strategy, and customer trust required to scale from there.

Advantage in the agentic era will come not from having more AI, but from knowing precisely where to use it and where not to.